A Hybrid Agentic AI Talent Platform for Automated, Transparent, and Scalable Evaluation of Job Applications

Abstract: In today’s rapidly evolving labor market, many organizations face mounting difficulties in efficiently and effectively identifying top job candidates when faced with potentially thousands of applications for each open role. The growing use of job-posting platforms, professional social networks, AI-assisted resume tools, and bot-generated applications is creating a significant transparency and data-processing burden for recruiting teams. Traditional applicant tracking systems (ATSs) and rule-based screening tools, though efficient for structured data, often fail to deliver high quality decision support, scalability, and more advanced levels of contextual understanding for job application analysis. As hiring processes become increasingly digital, new challenges emerge around data privacy, human and algorithmic bias, opportunity misalignment between candidates and roles, and a widening trust gap caused by opaque automated hiring practices. This study explores these limitations and proposes solutions to each, leveraging the strengths of traditional tools and artificial intelligence (AI), combining each solution into a hybrid agentic platform that integrates a variety of machine learning (ML) techniques and models, including large language models (LLMs), as well as privacy preserving methodologies. This multifaceted platform enhances evaluation of job candidates, reduces systemic bias, and strengthens transparency in early stages of candidate selection while improving efficiency, trustworthiness, and compliance with ethical and data-protection standards in large-scale recruitment.

Traditional Approaches to Processing Job Applications

Early-stage job application processing typically follows a structured, yet largely manual workflow centered on human judgment and basic automation. The process begins with the creation of a job requisition and the public posting of a role, followed by the submission of resumes and cover letters through online portals or ATSs. These systems organize and store applications but rely on predefined keyword filters or basic rule-based logic to identify candidates who appear to meet minimum qualifications. Human reviewers then conduct initial screenings, verifying certain attributes such as education, experience, or certifications, and removing candidates who do not meet baseline requirements. While ATS tools and resume parsers improve efficiency by helping manage high application volumes, they often depend on rigid pattern matching or rule-based criteria that may overlook qualified applicants who use nonstandard formats or language (Fuller et al., 2021). Due to the absence of standardization in resume review and scoring, evaluations are often inconsistent and subject to bias (Cohen et al., 2019). Additionally, the use of proxies such as degree or job title may mask true candidate potential, particularly for individuals from nontraditional backgrounds. Such traditional systems are limited in their ability to capture contextual nuance, adaptability, and fairness in candidate assessment. The cumulative effect is a process that is time-consuming, inconsistent, and prone to inefficiency, especially in competitive or high-volume hiring environments.

Challenges in Common Applicant Selection Processes

High Application Volume

The surge in job applications, fueled by “quick apply” features, the proliferation of online job posting sites, increased availability of remote work opportunities globally, and candidates casting wider nets in response to economic uncertainty, has left human resources (HR) departments contending with vastly larger resume inflows for open roles (Forbes, 2025). The high application volume introduces several challenges, including:

- Screening inefficiency. A large share of incoming applications fails to meet basic role requirements yet still consumes HR department resources. This diverts valuable time and attention away from evaluating qualified candidates and reduces overall hiring efficiency, creating significant bottlenecks in the early stages of recruitment (Maree et al., 2020).

- Quality risk. Rapid and superficial screening using traditional rule-based automation and processing increases the risk of overlooking qualified candidates, especially if resumes do not match rigid keyword patterns or formats. Recruiters may advance the most obvious or easy-to-assess candidates, rather than those with greater long-term potential, undermining strategic talent acquisition (Dutta & Vedak, 2023).

- Delays and attrition. Excessive application volume can lead to delayed responses (or no responses), generic messaging, and minimal feedback, all of which frustrate candidates and cause unnecessary drop-offs or losses to competitors. This poor candidate experience can damage employer reputation, reduce applicant engagement, and discourage future applications, especially among high-quality candidates who expect timely, respectful treatment (Priyadarsini & S.S, 2025).

- Resource strain. Quantitative increases to inbound applications often translate to overwhelmed HR teams with higher risks of burnout or inconsistency in hiring. This pressure compromises the depth and fairness of application evaluation, particularly for non-traditional candidates (Fisher et al., 2021).

Collectively, these pressures diminish both the efficiency (time to hire, throughput) and the effectiveness (quality of hires, fairness, candidate experience) of high-volume recruitment.

Applicant Privacy

ATSs, resume parsers, and AI-enabled assessment systems routinely collect, store, and process extensive personally identifiable information (PII) and other sensitive data. While these tools help recruitment scale, they introduce substantial privacy risks if not managed with care. A primary risk in collecting PII is a data breach resulting from weak security practices. For example, in 2025 a vulnerability in McDonald’s AI hiring chatbot “Olivia,” developed by Paradox.ai, exposed millions of applicant chat records through weak administrator credentials. The leaked information included names, emails, phone numbers, and more (Wired, 2025). Legal and regulatory frameworks add further considerations. Under the General Data Protection Regulation (GDPR) in the EU, certain data categories (such as health or race) are classified as “sensitive” and face stricter processing requirements (GDPR, 2016). Automated decision-making also requires transparency, consent, and rights to explanation. In the U.S., some state laws (e.g., Illinois’ biometric privacy laws) require explicit consent for collecting biometric data or using video interviews with facial recognition (American Privacy Rights Act of 2024, 2024). HR tools that process such data without clearly obtaining and managing consent risk violating privacy laws (NIST, 2023). Data retention and pseudonymization practices are often weak in ATSs and other hiring tools. Many tools retain PII longer than necessary or do not properly restrict identifying data in analytical systems. For example, a case study of an ATS used by Rangreen showed that both successful and unsuccessful candidates’ data (identifiers, demographics, and recruiting data) were stored, with pseudonymization and anonymization applied only under certain conditions and after delays (ICO, 2025). Weak retention practices increase the risk of misuse, unauthorized access, or eventual data exposure.

Cognitive Bias of Recruiters

While both human reviewers and traditional ATSs remain essential to modern recruitment, each introduces significant risks of bias that challenge fairness and objectivity in hiring. Human evaluators are susceptible to conscious and unconscious biases shaped by social norms and prior experiences, often giving undue weight to factors such as race, gender, university prestige, or even hobbies and names. These subtle cognitive shortcuts can result in unequal treatment, where candidates who mirror existing organizational or cultural norms are favored over equally or more qualified candidates from underrepresented backgrounds. Traditional ATSs, designed to automate and streamline this process, are not immune to similar problems. Rule-based filters can unintentionally encode historical inequities, while systems trained on imbalanced or non-representative data perpetuate discriminatory patterns already embedded in past hiring decisions. Research has shown that linguistic and feature based models frequently reflect societal stereotypes, with word embeddings and keyword matching disadvantaging applicants from certain national or ethnic origins (Li et al., 2023). Likewise, studies of resume evaluation demonstrate that human decision makers systematically favor familiar or prestigious profiles, reinforcing structural inequities in access to opportunity (Moore et al., 2023). Beyond ethical concerns, such biases carry tangible risks, such as eroding candidate trust, damaging organizational reputation, and exposing companies to potential legal liability. Addressing these biases requires transparent, accountable systems and deliberate oversight to ensure that efficiency does not come at the expense of equity.

Opportunity Misalignment

In many organizations, candidate screening is tightly bound to role-specific criteria: rigid job requirements, narrow educational credentials, or fixed skill lists. This specificity often creates opportunity misalignment, where candidates who would be well suited for roles, teams, or levels other than those to which they applied are rejected. Given the high volume of applicants, most teams are unable to redirect candidates to more suitable roles within their organization. Traditional hiring tools often consider only keyword matches between a candidate’s resume and the applied job description. A candidate’s broader skill set, transferable skills, and potential adaptability across roles are not considered. Many organizations also retain “required qualifications” emphasizing formal credentials (e.g., degree, years of experience) rather than actual skills or potential. This limits the pool of applicants who can be considered for roles outside of their experience, even when they have relevant skills or could quickly learn. The consequences of opportunity misalignment are significant. Highly qualified individuals may never be considered for roles where they could add value, leading to frustration, wasted effort, and lost morale. For organizations, misalignment reduces talent utilization, limits diversity of thought, and undercuts recruitment ROI. Over time it can also contribute to higher turnover or gaps in filling roles. Research using data from the Organization for Economic Co-operation and Development (OECD) and the Program for the International Assessment of Adult Competencies (PIAAC) suggests that opportunity misalignment carries economic costs: when workers are overqualified or underqualified, productivity suffers and overall economic output declines (Rathelot, 2023).

Model Interpretability and Trust Gap

Many modern ATSs operate opaquely, even to those who deploy them. Internal logic, feature weighting, ranking criteria, and thresholds are often hidden, either by design (e.g., proprietary models) or due to model complexity (e.g., deep learning or ensembles) that hinders interpretability. While these tools may offer efficiency and consistency in screening, their “black box” nature can generate a significant trust gap among candidates, HR practitioners, regulators, and the public (Ajunwa, 2020). A core driver of the trust gap is the lack of transparency around how decisions are made. Candidates often have no way of knowing why their application was rejected or advanced, whether due to keyword matching, inferred traits, or historical data patterns. This opacity breeds suspicion, reduces candidates’ confidence in fairness, and can damage employer reputation. According to the U.S. Department of Justice and Equal Employment Opportunity Commission (2024), many AI-powered hiring systems disadvantage candidates for unclear or undisclosed reasons, such as penalizing proxies for protected characteristics (e.g., race, age, disability) or failing to disclose algorithmic use.

Limitations with Existing Automation Tools

Automated hiring tools such as ATSs, keyword filters, and basic Natural Language Processing (NLP) are now widespread in recruitment. These tools streamline resume screening, reduce manual workload, and allow HR teams to manage applications at scale. However, despite accelerating the hiring process, these tools suffer from shortcomings that undermine both fairness and utility. Outlined below are the downsides to relying on traditional hiring tools:

- Limited accuracy on unstructured inputs. Traditional keyword-based parsers perform well only when resumes conform to expected templates or formats. Their high precision comes at the cost of recall, meaning that valuable candidates can be missed if their resumes use unconventional structure or wording. This rigidity becomes a serious drawback in real-world recruitment pipelines, where applicants often use visually distinct or creative designs to stand out (Zielinski, 2022; Maree et al., 2020).

- Rigid and brittle parsing logic. Classical rule-driven systems rely on hard coded patterns or regular expressions that must be updated whenever document structure changes. Even minor variations, such as substituting “Work Background” for “Professional Experience,” can break parsing logic. This lack of adaptability makes traditional tools fragile and limits their usefulness in global or fast evolving industries where resume conventions vary widely (Chiticariu et al., 2013).

- Poor support for complex file formats. Traditional parsers generally handle plain text or simple PDFs, but struggle with layouts containing tables, columns, charts, or scanned documents. As resumes increasingly incorporate infographics, visual timelines, and portfolio samples, legacy systems fail to extract relevant data accurately from such documents. This narrow format compatibility restricts the diversity of candidates who can be fairly assessed (Gemelli et al., 2024).

- No contextual understanding. Rule-based systems treat words as isolated tokens and cannot interpret context, such as distinguishing a graduation date from an employment period when both appear near similar keywords. The result is misclassification of fields, timeline errors, and ultimately reduced trust in automated screening outcomes.

- Low scalability and adaptability. Extending traditional parsers to new markets or job types requires manual creation and testing of additional rules. As organizations expand across regions with different languages or resume conventions, maintaining consistency and accuracy becomes prohibitively labor intensive. This manual overhead limits scalability and delays deployment.

- Short-term cost efficiency, long-term inefficiency. While traditional systems may appear more cost effective to deploy initially, their cumulative maintenance costs rise steeply as resume diversity increases. Continuous rule updates, version control, and regression testing inflate total cost of ownership, making the approach economically unsustainable for large or multinational enterprises (Chiticariu et al., 2013).
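The brittleness described in the list above can be illustrated with a minimal Python sketch. The pattern and function names here are hypothetical and stand in for the kind of hard-coded rule a legacy parser might contain; they are not taken from any specific ATS.

```python
import re

# Hypothetical rule of the kind a legacy parser might hard-code:
# it recognizes exactly one section heading.
EXPERIENCE_HEADING = re.compile(
    r"^\s*Professional Experience\s*$", re.IGNORECASE | re.MULTILINE
)


def find_experience_section(resume_text: str) -> bool:
    """Return True if the hard-coded heading pattern matches a line of the resume."""
    return EXPERIENCE_HEADING.search(resume_text) is not None


resume_a = "Jane Doe\nProfessional Experience\nData Analyst, 2019-2023"
resume_b = "Jane Doe\nWork Background\nData Analyst, 2019-2023"

print(find_experience_section(resume_a))  # heading matches the rule
print(find_experience_section(resume_b))  # same content, different heading: the rule misses it
```

Both resumes describe the same experience, yet only the first is recognized. Covering the second requires a new rule, which is the maintenance burden the list above attributes to rule-driven systems.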

Hybrid Agentic AI Talent Platform

Recruiting challenges (an overabundance of applications, data privacy vulnerabilities, cognitive bias, opportunity misalignment, mistrust in opaque hiring strategies, and limitations with traditional ATSs) can be addressed by taking advantage of the flexibility and contextual awareness of agentic AI. By combining the individual strengths of AI agents and ML in an integrated, cohesive system, organizations can build an efficient pipeline for swift and fair hiring decisions that addresses the issues outlined above. The platform is built upon the following:

- Resume Parsing. Accurately interpreting diverse formats, preserving structure, and adapting to new layouts, outperforming rigid rule-based systems.

- Deeper Analytical Evaluation. Understanding context and meaning in resumes to enable more accurate, inclusive, and efficient identification of qualified candidates.

- Automated PII Protection and Detection. Automatically safeguarding sensitive candidate data at scale while reducing bias and ensuring regulatory compliance.

- Fused ML-Driven Quantitative Scoring Method. Selecting candidates via reliable, interpretable, and resource-efficient candidate scoring for structured resume data, in support of fair and auditable hiring decisions in high-volume and regulated environments.

- Systematic Role Matching. Systematically analyzing candidate and job data, uncovering complex qualification-job relationships, and adapting to trends in order to improve fit, fairness, and recruitment efficiency.

- Uniform Evaluation Process. Promoting fairness and trust by standardizing evaluation criteria, removing demographic bias, and ensuring consistent assessment across all candidates.

- Chat Interface for Viewing and Filtering. Providing recruiters with an intuitive, conversational interface to filter and interact with candidate data, streamlining access, enhancing transparency, and supporting decision-making without replacing human judgment.

- Analysis Report Generation. Synthesizing unstructured data, highlighting relevant skills and nuanced qualities, and reducing recruiter cognitive load for more strategic decision-making.

- Customized Candidate Engagement. Enhancing engagement, satisfaction, and organizational reputation while reducing recruiter workload.

- Scalability. Enabling organizations to efficiently and consistently process large application volumes.

The following details each of the above features in a hybrid agentic AI talent platform:

Resume Parsing

AI significantly advances resume parsing by replacing brittle, rule-based methods with flexible, context-aware, multimodal models capable of interpreting diverse document formats, including text, tables, and PDFs. Unlike traditional regex systems that break with nonstandard headings or layouts, modern architectures such as LayoutLM jointly encode textual, spatial, and visual cues, enabling them to recognize equivalent fields and maintain structural context. For instance, these systems can recognize “Education” whether it is placed in a column or in an embedded table (Xu et al., 2020). Recent extensions using multi-granularity fusion further enhance extraction accuracy by capturing hierarchical relationships from token to table cell levels (Jiang et al., 2024). This approach yields concrete benefits: improved recall on irregular or OCR-processed resumes, contextual disambiguation of entities such as dates or roles, and reliable preservation of tabular data. Moreover, because these models learn from data rather than fixed rules, they can evolve through active learning and incremental labeling to accommodate new formats, offering a robust, adaptive alternative to the rigid maintenance demands of regex-based parsers.

Deeper Analytical Evaluation

AI systems employ semantic analysis and contextual understanding to evaluate resumes, which can overcome the limitations found in simple keyword matching and traditional ATSs (Abhishek, 2025; Bevara et al., 2025). By capturing the meaning behind words and phrases, recognizing synonyms, related terms, and industry-specific jargon, LLM-based AI can outperform traditional NLP tools when applied to understanding tasks (Ajjam & Al-Raweshidy, 2025). This deeper analytical capability enables more accurate assessment of candidate qualifications, ensuring relevant experience is not overlooked due to language differences (Lo et al., 2025). Integrating advanced AI capable of deeper analytical evaluation into the resume screening process addresses the limitations of traditional keyword and NLP-based ATSs. By understanding context and meaning in resumes, today’s LLM-based AI systems offer a more accurate, inclusive, and efficient method for identifying qualified candidates, leading to better hiring outcomes.

Table 1. A comparison between traditional and advanced AI tools in ATSs.

| | Traditional Tools | Advanced AI Tools |
|---|---|---|
| Accuracy | Works well if resumes strictly follow expected formats; high precision on structured input. | Handles unstructured, varied, or noisy resumes; higher recall and context sensitivity across formats. |
| Flexibility | Rigid: breaks when headings, fonts, or layouts change (e.g., “Professional Experience” vs. “Work Background”). | Flexible: models learn semantic equivalences (e.g., “Education” vs. “Academic Background”). |
| Formats Supported | Mostly text or simple PDFs; struggles with tables, graphics, or scanned images. | Multimodal: processes text, tables, and scanned PDFs; robust with OCR + layout-aware models (e.g., LayoutLM). |
| Context Awareness | Cannot disambiguate similar tokens (e.g., “May 2020” as employment vs. graduation date). | Uses context windows to resolve ambiguity, improving field classification and reducing errors. |
| Scalability | Requires manual rule updates for new resume styles; difficult to scale globally across industries. | Scales via retraining or fine-tuning with new data; adapts more easily across roles, industries, and geographies. |
| Cost | Low upfront cost, but maintenance effort is high long-term. | Higher upfront (model training, infrastructure), but lower marginal cost at scale. |

Automated PII Protection and Detection

AI-based PII detection and protection provide a scalable and reliable solution for managing sensitive candidate data while ensuring compliance with major privacy regulations such as GDPR, CCPA, and HIPAA (Asthana et al., 2025). These systems automatically identify and redact dozens of PII types, such as names, addresses, Social Security numbers, emails, and phone numbers across thousands of resumes, minimizing human error and improving efficiency (Thetbanthad et al., 2025). Integrated directly into ATS and HRIS platforms, AI tools can perform real-time scanning, redaction, and anonymization without disrupting recruiter workflows. Beyond compliance, obfuscating sensitive information reduces the risk of bias by allowing recruiters to evaluate qualifications and experience without exposure to protected attributes. Modern LLMs further enhance this process by accurately detecting PII in free-form text, outperforming traditional rule-based and NLP systems (Shen et al., 2025). Together, these AI safeguards combine automation, fairness, and security to protect candidate privacy, foster trust, and strengthen employer reputation in large-scale hiring environments.
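To make the redaction step concrete, the following Python sketch shows a deliberately simple rule-based baseline of the kind the paragraph above says LLM-based detectors outperform. The patterns, labels, and the `redact_pii` function are illustrative assumptions. They cover only a few U.S.-style formats and are not the platform's actual detection method.

```python
import re

# Illustrative patterns only. Real resumes need far broader coverage
# (names, addresses, international phone formats, and so on).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\(?\b\d{3}\)?[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact_pii(text: str) -> str:
    """Replace each matched PII span with a bracketed type label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


sample = "Contact: jane.doe@example.com, 555-123-4567, SSN 123-45-6789"
print(redact_pii(sample))  # Contact: [EMAIL], [PHONE], SSN [SSN]
```

A baseline like this cannot detect names or free-form addresses, which is one reason the text points to model-based detection for unstructured resume content.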

Fused ML-Driven Quantitative Scoring Method

For scoring candidates based on parsed resume data, classical ML models often provide the most effective and reliable approach (Grinsztajn et al., 2022). Techniques such as logistic regression, support vector machines, and gradient boosting suit structured tabular data and can provide transparent, interpretable outputs aligned with HR decision making needs. Unlike large scale deep learning models, which may overfit, hallucinate, or require prohibitively large datasets, classical models perform well with fewer resources while remaining reproducible and easier to validate (Rudin, 2019). Their simplicity also facilitates auditing for bias and compliance, making them well suited for high volume, regulated environments where fairness, accountability, and efficiency are paramount.
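The interpretability argument can be sketched with a logistic regression scorer written in plain Python. The feature names, weights, and bias below are hypothetical values chosen for illustration; in practice they would be learned from validated training data.

```python
import math

# Hypothetical coefficients, as if learned by a logistic regression model.
# Feature names and values are illustrative, not taken from any real system.
WEIGHTS = {"years_experience": 0.30, "skills_matched": 0.50, "has_certification": 0.80}
BIAS = -3.0


def score_candidate(features: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return (score between 0 and 1, per-feature contribution to the logit)."""
    contributions = {name: WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    return 1.0 / (1.0 + math.exp(-logit)), contributions


score, parts = score_candidate(
    {"years_experience": 4, "skills_matched": 3, "has_certification": 1}
)
print(round(score, 3))
print(parts)  # each feature's additive contribution, available for audit
```

Because the score is a monotone function of a weighted sum, each feature's contribution can be read directly from `parts`, which is what makes this model family comparatively easy to audit.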

Systematic Role Matching

In response to opportunity misalignment, AI-driven systems offer a systematic, data-driven approach to matching candidates with suitable positions. AI-powered matching engines analyze resumes, job descriptions, and historical hiring patterns to identify the most relevant candidates for a role (Rojas-Galeano et al., 2022). Using ML, these systems uncover complex relationships between candidate qualifications and job requirements that may not be obvious to human recruiters (Jiang et al., 2020). AI systems also continuously learn and adapt to changing hiring trends, keeping the matching process up to date and relevant (Zhao et al., 2021). This dynamic adaptability allows organizations to respond more effectively to shifts in the job market and internal business needs. By providing a more objective and comprehensive analysis of candidate qualifications, AI-driven matching engines can help organizations make more informed and equitable hiring decisions. This approach improves recruitment efficiency and enhances hire quality by ensuring a better fit between candidates and roles.
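The core idea of scoring a candidate against every open role, rather than only the one applied for, can be sketched as follows. This is a simplified stand-in that uses skill-set overlap; the role names, skills, and function names are hypothetical, and a learned matching engine would replace `role_fit` with a trained model.

```python
def role_fit(candidate_skills: set[str], required_skills: set[str]) -> float:
    """Fraction of a role's required skills that the candidate covers."""
    if not required_skills:
        return 0.0
    return len(candidate_skills & required_skills) / len(required_skills)


def rank_roles(
    candidate_skills: set[str], roles: dict[str, set[str]]
) -> list[tuple[str, float]]:
    """Score the candidate against every open role, best fit first."""
    scored = [(name, role_fit(candidate_skills, skills)) for name, skills in roles.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


open_roles = {
    "Data Engineer": {"python", "sql", "spark", "airflow"},
    "Data Analyst": {"sql", "excel", "tableau"},
    "ML Engineer": {"python", "pytorch", "docker", "sql"},
}
candidate = {"sql", "excel", "tableau", "python"}
ranking = rank_roles(candidate, open_roles)
print(ranking[0])  # the best-fitting role for this candidate
```

In this example, a candidate who applied for the Data Engineer role would surface as a stronger match for Data Analyst, which is the kind of redirection that high-volume manual screening rarely has time for.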

Uniform Evaluation Process

Trust in the hiring process can be strengthened by utilizing AI for hiring decisions. AI systems bring fairness and equity to hiring by providing a more uniform application process, standardizing evaluation criteria, and focusing on objective qualifications. A Northeastern University study found that applicants perceive AI powered hiring as more fair when the algorithms are blind to characteristics such as race, age, or gender. This approach, called “fairness through unawareness,” removes demographic information from evaluation to prevent bias. The study showed candidates view companies using such AI tools more positively and are more motivated to apply (Northeastern University, 2024). AI implementation also promotes consistency across recruitment stages. By automating tasks such as resume screening and initial assessments, AI systems ensure that all candidates are evaluated based on the same criteria, reducing variability in decision making. This uniformity helps create a level playing field for all applicants, regardless of background.
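A minimal sketch of “fairness through unawareness” is shown below: protected attributes are stripped from a candidate record before any scoring step sees it. The field names are hypothetical, and which attributes count as protected depends on jurisdiction and policy.

```python
# Hypothetical field names; the protected set depends on jurisdiction and policy.
PROTECTED_FIELDS = {"name", "age", "gender", "race", "photo_url"}


def blind_record(candidate: dict) -> dict:
    """Return a copy of the record without protected attributes."""
    return {key: value for key, value in candidate.items() if key not in PROTECTED_FIELDS}


record = {
    "name": "A. Applicant",
    "age": 41,
    "gender": "F",
    "skills": ["sql", "python"],
    "years_experience": 12,
}
blinded = blind_record(record)
print(blinded)  # only skills and years_experience remain
```

Removing these fields does not remove proxies for them (for example, graduation year as a proxy for age), so this step is best treated as one safeguard alongside auditing rather than a complete remedy.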

Chat Interface for Viewing and Filtering

A chatbot connected to an HR resume database can serve as an intuitive interface for recruiters, enabling conversational, on-demand interaction with candidate data. Instead of navigating complex dashboards or static reports, users can query the chatbot to filter candidates by skills, experience, education, or other criteria. This natural language interaction lowers the learning curve for non-technical staff and accelerates decision making. With customizable filters and sorting, the chatbot empowers users to shape candidate shortlists, ensuring automation supports rather than replaces human judgment. Such a system streamlines access to large applicant pools while enhancing transparency and flexibility in hiring decisions (Dodda et al., 2025).
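The filtering step behind such an interface might look like the following sketch. It assumes the conversational layer has already translated a recruiter's request into structured arguments; the `Candidate` fields and the `apply_filter` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    candidate_id: str
    skills: set[str] = field(default_factory=set)
    years_experience: float = 0.0


def apply_filter(
    candidates: list[Candidate], required_skills: set[str], min_years: float = 0.0
) -> list[Candidate]:
    """Keep candidates with every required skill and enough experience, most experienced first."""
    matches = [
        c for c in candidates
        if required_skills <= c.skills and c.years_experience >= min_years
    ]
    return sorted(matches, key=lambda c: c.years_experience, reverse=True)


pool = [
    Candidate("c1", {"python", "sql"}, 5),
    Candidate("c2", {"python"}, 8),
    Candidate("c3", {"python", "sql", "spark"}, 7),
]
# A request such as "Python and SQL, at least 4 years" is assumed to have been
# translated into these arguments by the conversational layer.
shortlist = apply_filter(pool, required_skills={"python", "sql"}, min_years=4)
print([c.candidate_id for c in shortlist])  # ['c3', 'c1']
```

Keeping the filter itself deterministic means the recruiter can see exactly which criteria produced a shortlist, while the language model is confined to interpreting the request.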

Analysis Report Generation

LLMs are well suited for generating resume analysis reports, synthesizing unstructured information into coherent, context-rich summaries (Gan et al., 2024). Resumes often contain diverse formats, terminology, and levels of detail, making them difficult to standardize at scale. LLMs excel at extracting relevant skills, experiences, and accomplishments, then presenting them in clear, recruiter-tailored narratives. Unlike rigid rule-based systems, LLMs adapt to domain-specific language and highlight nuanced qualities such as leadership or problem-solving from text (Vaishampayan et al., 2025). The result is a concise, customizable report that reduces recruiter cognitive load, enabling focus on strategic decision making while retaining a comprehensive candidate view.

Customized Candidate Engagement

AI enables more personalized, timely, and context-aware communication throughout the hiring process, transforming how candidates experience engagement at scale (Madanchian, 2024). Instead of relying on impersonal, one-size-fits-all updates, AI powered systems can tailor messages to each applicant’s stage, role, and prior interactions, crafting responses that feel thoughtful and human. Natural language generation tools can adjust tone and content to acknowledge specific skills, share feedback, or highlight relevant company insights, while AI driven chatbots and virtual assistants provide consistent, real-time support aligned with employer branding. This blend of personalization and automation not only enhances transparency and candidate satisfaction but also strengthens organizational reputation and reduces recruiter workload, ensuring no applicant feels overlooked. Research further indicates that candidates view AI assisted communication as both useful and easy to use, reinforcing its value in fostering positive, scalable recruitment experiences (Horodyski, 2023).

Scalability

To address screening inefficiency, organizations are adopting AI-driven solutions that provide scalability, efficiency, and consistency (Chen, 2022). AI-powered resume screening tools swiftly process large volumes of applications, identifying candidates whose skills and experiences align with job requirements. These systems reduce manual review time, enabling HR teams to focus on strategic decisions (Dima et al., 2024). Implementing AI in high-volume hiring processes enables organizations to manage large applicant pools efficiently while maintaining quality and consistency. By automating repetitive tasks and leveraging data-driven insights, AI enables HR teams to focus on strategic recruitment, leading to better hiring outcomes and stronger organizational performance (Madanchian, 2024).

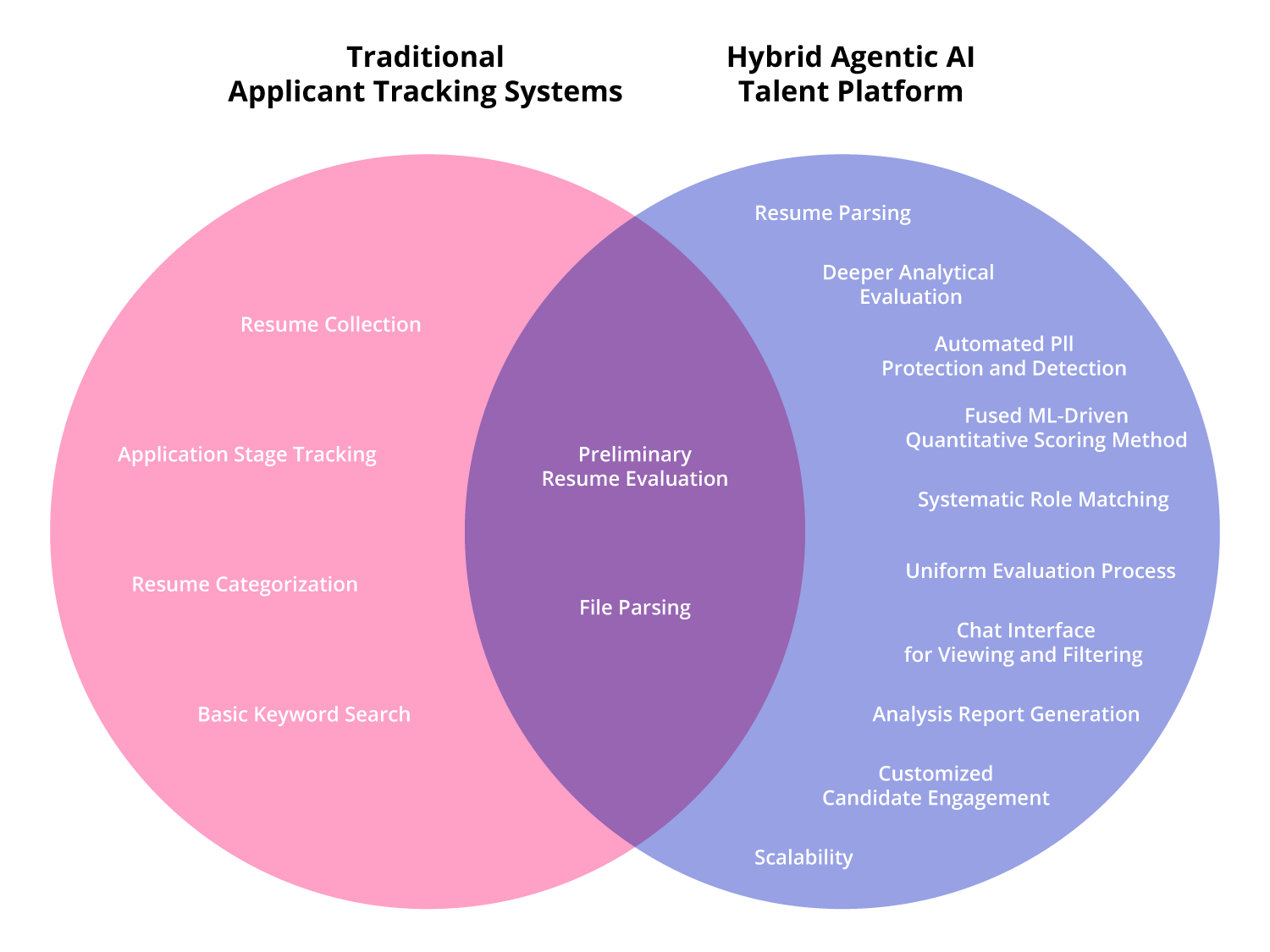

Figure 1: Comparison of traditional ATSs and the Hybrid Agentic AI Talent Platform in early-stage resume screening. Traditional tools primarily support administrative and organizational functions such as resume collection, application stage tracking, resume categorization, and basic keyword search, providing structure but limited analytical depth. In contrast, hybrid agentic AI systems extend these capabilities through multimodal resume parsing, criteria-based resume scoring, systematic role matching, privacy preserving data handling, and scalable automation. They also enable interactive communication via chat interfaces and generate detailed analysis reports for recruiters. The overlap between the two approaches, preliminary resume evaluation and file parsing, highlights the shared goal of efficiently processing candidate data, though AI driven methods achieve this with greater contextual understanding, adaptability, and consistency.

Conclusion

Modern recruitment sits at the crossroads of immense opportunity and escalating challenge. As applicant volumes surge and global labor markets continue to shift, traditional screening approaches struggle to preserve speed, equity, and contextual understanding. Throughout this paper, we examined the limitations of existing systems, from cognitive bias and data privacy vulnerabilities to opportunity misalignment and a widening trust gap between candidates and employers. These issues demonstrate that while automation has alleviated some inefficiencies, the status quo remains insufficient for recruiting environments where fairness, interpretability, and scalability are increasingly non-negotiable.

To address these gaps, this paper introduced a multi-layered, agentic AI system that combines ML, LLMs, and privacy-preserving methodologies into a unified pipeline for candidate evaluation. The Hybrid Agentic AI Talent Platform supports the hiring lifecycle from resume parsing and quantitative scoring to contextual analysis, systematic role matching, and personalized candidate engagement. By integrating automation thoughtfully with conversational interfaces and transparent decision pathways, organizations can transition from reactive filtering to proactive talent discovery, unlocking value that rigid ATS tools cannot. At its core, this approach emphasizes not just efficiency, but trustworthiness: anonymization reduces bias, semantic understanding elevates hidden talent, and adaptive learning aligns opportunity with potential.

While promising, the path toward fully equitable automated hiring demands continued vigilance. Risks remain around algorithmic bias, disproportionate exclusion of marginalized applicants, and the opacity of increasingly powerful models. Future research must prioritize explainability, robust auditing, and candidate rights to ensure that innovation strengthens, rather than weakens, public confidence in recruitment systems. Building strong governance around data handling and ethical AI deployment will be essential as the industry shifts toward deeper automation. If designed and adopted responsibly, the Hybrid Agentic AI Talent Platform described herein can equip organizations to scale hiring with both human dignity and operational excellence at the center of every decision.

ABOUT ENTEFY

Entefy is an enterprise AI software company. Entefy’s patented, multisensory AI technology delivers on the promise of the intelligent enterprise, at unprecedented speed and scale.

Entefy products and services help organizations transform their legacy systems and business processes—everything from knowledge management to workflows, supply chain logistics, cybersecurity, data privacy, customer engagement, quality assurance, forecasting, and more. Entefy’s customers vary in size from SMEs to large global public companies across multiple industries including financial services, healthcare, retail, and manufacturing.

To leap ahead and future proof your business with Entefy’s breakthrough AI technologies, visit www.entefy.com or contact us at contact@entefy.com.